Master thesis 2025

Technical University of Applied Sciences Augsburg

Team members: Luisa Lentze, Clara Eckhardt

Photo & video documentation: Julia Heß, Olivia Nigl, Julia Herzog

As soon as a participant steps onto one of the stages, the system instantly recognizes them and generates a unique avatar that mirrors their movements in real time.

When the user opens their mouth, the avatar does the same and begins to sing like an opera singer. No singing skills required!

The sound can be shaped through simple movements: hand positions control pitch and volume, while mouth shapes determine the vowel (A, E, or O).
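One possible way to turn tracked positions into these sound parameters is shown in the minimal Python sketch below. It is not the project's TouchDesigner implementation. It makes several assumptions: hand heights are already normalized to the range 0.0 (low) to 1.0 (high), one hand's height sets the pitch and the other's sets the volume, and lip distances are normalized by face size. The note range and the vowel thresholds are hypothetical placeholders.

```python
from typing import Optional

# Hypothetical calibration values, not taken from the project.
PITCH_LOW_MIDI = 48   # assumed lowest note (C3), hand at its lowest tracked position
PITCH_HIGH_MIDI = 72  # assumed highest note (C5), hand fully raised


def clamp01(x: float) -> float:
    """Clamp a value into the range 0.0 .. 1.0."""
    return max(0.0, min(1.0, x))


def hand_to_pitch(hand_height: float) -> int:
    """Linearly map a normalized hand height (0.0 low, 1.0 high) to a MIDI note."""
    t = clamp01(hand_height)
    return round(PITCH_LOW_MIDI + t * (PITCH_HIGH_MIDI - PITCH_LOW_MIDI))


def midi_to_hz(note: int) -> float:
    """Convert a MIDI note number to frequency in Hz (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((note - 69) / 12.0)


def hand_to_volume(hand_height: float) -> float:
    """Use the other hand's normalized height directly as gain (0.0 silent, 1.0 full)."""
    return clamp01(hand_height)


def classify_vowel(mouth_open: float, mouth_width: float) -> Optional[str]:
    """Classify the mouth shape as "A", "E" or "O", or None if the mouth is closed.

    mouth_open and mouth_width are lip distances normalized by face size;
    the thresholds below are illustrative placeholders, not calibrated values.
    """
    if mouth_open < 0.02:
        return None  # closed mouth: the avatar stays silent
    if mouth_width < 0.35:
        return "O"   # narrow, rounded lips
    if mouth_open > 0.08:
        return "A"   # wide and clearly open
    return "E"       # wide but only slightly open
```

For example, in this sketch a hand held halfway up maps to MIDI note 60 (middle C). A wide, clearly open mouth is read as "A".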

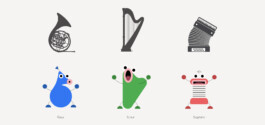

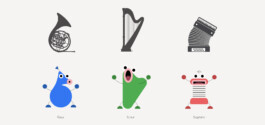

The design of the avatars is inspired by musical instruments, resulting in unique, rounded forms that feel friendly and approachable. The mouth is the central design element: it produces the sound and visibly forms the vowels A, E, and O. Bold, bright colors give the design an energetic and playful feel.

Movement directly shapes the avatars’ bodies: when participants raise their arms to increase the pitch, the avatar stretches upward and grows taller. When they lower their arms, the avatar shrinks. In this way, sound and motion are visually connected.
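The coupling between arm height and body shape can be sketched in Python as below. This is a minimal sketch that assumes the same normalized arm height (0.0 arms down, 1.0 arms fully raised) drives both pitch and the avatar's vertical scale. The scale limits are hypothetical. The exponential moving average is one common way to damp jitter in camera tracking data; the source does not state that the project uses it.

```python
# Hypothetical scale limits for the avatar's vertical stretch.
MIN_SCALE_Y = 0.7  # arms down: avatar shrinks
MAX_SCALE_Y = 1.6  # arms fully raised: avatar stretches upward


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b for t in 0.0 .. 1.0."""
    return a + (b - a) * t


def arm_height_to_scale(arm_height: float) -> float:
    """Map a normalized arm height to the avatar's vertical scale factor."""
    t = max(0.0, min(1.0, arm_height))
    return lerp(MIN_SCALE_Y, MAX_SCALE_Y, t)


class Smoother:
    """Exponential moving average that damps frame-to-frame tracking jitter."""

    def __init__(self, alpha: float, initial: float) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha   # higher alpha reacts faster but smooths less
        self.value = initial

    def update(self, target: float) -> float:
        """Move the smoothed value a fraction alpha toward the new target."""
        self.value += self.alpha * (target - self.value)
        return self.value
```

Per frame, the tracked arm height would be passed through `arm_height_to_scale` and then `Smoother.update`, so the avatar grows and shrinks fluidly instead of flickering with every small tracking error.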

To find the most intuitive ways to shape the sound and to ensure the best possible experience, we conducted several rounds of user testing. The best-rated interactions were included in the final design.

The installation was built using TouchDesigner. A Kinect camera captures full-body movement, while a webcam at each stage tracks facial expressions using a MediaPipe plugin by Torin Blankensmith and Dom Scott.

Both body and face data are processed and mapped in real time to the appearance of the avatars and to the sound output. The audio is based on MP3 recordings from “Google Blob Opera”, which was also a major inspiration for the project.
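The source does not describe how the recordings are played back at different pitches. One simple technique is shown below as a Python sketch: store one sustained recording per vowel at a known reference note and change its playback speed to reach the target note. In equal temperament, each semitone corresponds to a speed factor of 2^(1/12). The file names and the reference note are hypothetical, and this is an assumed approach, not the project's confirmed method. Note that plain speed changes also alter the timbre and duration of a recording.

```python
from typing import Optional, Tuple

# Hypothetical sample bank: one sustained recording per vowel,
# each recorded at the same assumed reference note (MIDI 60, middle C).
SAMPLES = {
    "A": ("blob_a.mp3", 60),
    "E": ("blob_e.mp3", 60),
    "O": ("blob_o.mp3", 60),
}


def playback_rate(target_midi: int, reference_midi: int) -> float:
    """Speed factor that shifts a recording from reference_midi to target_midi."""
    return 2.0 ** ((target_midi - reference_midi) / 12.0)


def select_sample(vowel: Optional[str], target_midi: int) -> Optional[Tuple[str, float]]:
    """Return (file name, playback rate) for the current vowel, or None for silence."""
    if vowel is None:
        return None  # mouth closed: nothing to play
    filename, reference = SAMPLES[vowel]
    return filename, playback_rate(target_midi, reference)
```

With these assumptions, singing an "A" one octave above the reference note plays `blob_a.mp3` at double speed.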